The arrest of a junior US Air National Guardsman for taking and sharing classified military intelligence is less a story about data theft, or even war, than a story about how sensitive information, once leaked into the wrong hands, has the power to shape history – for better or worse.

For federal, defense and critical infrastructure entities, this case should serve as a familiar call to action to redefine risk from the inside out, and to prioritize proactive mitigation before the next horse bolts.

For Background

The FBI arrested 21-year-old Jack Teixeira on Thursday for leaking classified Pentagon files containing national security secrets, including intelligence about the Ukraine war.

Teixeira, who will be charged under the Espionage Act, shared the files with an online gaming chat group called Thug Shaker Central. Many of the sensitive documents later surfaced on Twitter and Telegram, quickly catching the eye of the intelligence community and the US Department of Justice.

The arrest has reignited the conversation about access control to potentially damaging intelligence, sparking a raft of questions: How was a junior staffer able to access such sensitive information? Why weren’t adequate security controls implemented? Were those controls tried and tested? Did Teixeira act with ill intent? Was Teixeira even aware of the gravity and ramifications of what he was doing in sharing the files online?

History Repeats

The unfortunate truth is that this is not the first time a leak of such gravity has occurred. A similar storm unfolded in 2010 when former Army soldier Chelsea Manning (Bradley Manning at the time) was arrested for leaking classified information to WikiLeaks. In 2013, government contractor Edward Snowden shared classified documents about government surveillance with journalists. Both events had a significant impact on the perception of employees and contractors in the federal space, and the Manning leak paved the way for former President Barack Obama’s 2011 Executive Order (EO) establishing the National Insider Threat Task Force (NITTF).

As part of the EO, Obama made it mandatory for federal entities with classified networks to establish insider threat detection and prevention programs.

“The primary mission of the NITTF is to develop a government-wide insider threat program for deterring, detecting, and mitigating insider threats, including the safeguarding of classified information from exploitation, compromise, or other unauthorized disclosure, taking into account risk levels, as well as the distinct needs, missions, and systems of individual agencies.”

Twelve years have passed since the order was issued, so the question must be asked: why is classified information still being leaked? Perhaps a more constructive question is: where are federal insider threat programs falling short?

Letting the Cat Out of the Bag; When it’s Out, it’s Out

One cannot address insider risk or threat management without addressing human behavior and, in particular, motive (intent).

On the one hand, motive is irrelevant. Regardless of intent, once the data is out, it’s out. There’s no turning back, and the only resort is to respond. President Joe Biden has so far downplayed the severity of the recent Pentagon leak, but only time will reveal the truth. The point worth noting is how data, in the wrong hands, has the power to change the trajectory and magnitude of events over time, including warfare and its aftermath.

They say you should only focus on what you can control. By leaning into the early warning risk indicators of intent, federal agencies have an opportunity to turn the hose off before a leak occurs. They can “pro-act”, instead of “re-act”.

The Only Data is Actionable Data

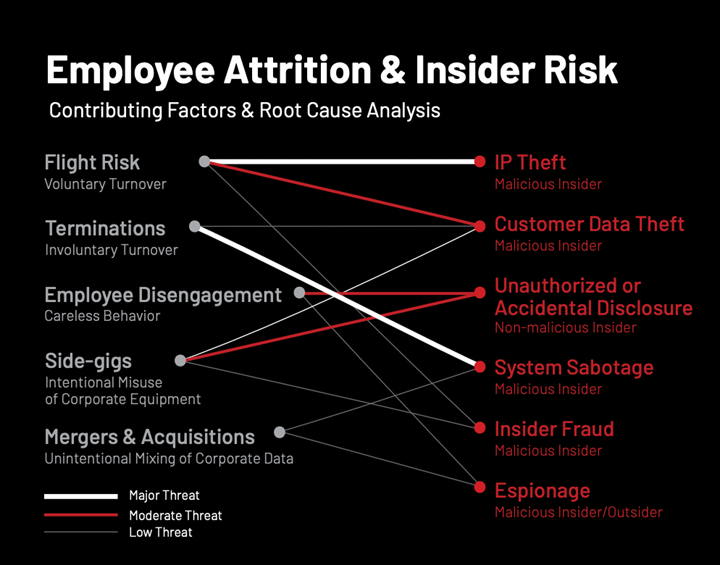

There are numerous tell-tale signs of insider risk; The DTEX i3 2023 Insider Risk Investigations Report identifies and links several specific early warning risk indicators with specific data loss events ranging from insider fraud and espionage to unauthorized disclosure and data theft:

What’s important to understand is that risk cannot be identified in isolation but rather as a result of aggregated cues derived from multiple data sets, including human resources and even open-source intelligence.

In the case of Teixeira, motive remains elusive. But regardless of intent, it would be interesting to look under the hood of the federal insider program: Was Teixeira on their radar? Were they aware that he was part of an online forum united by a mutual interest in guns, military gear and God? Did their data collection account for anomalies per peer group?

Viewed in isolation, these can appear as weak signals. But when data points from multiple sources are aggregated, a clearer, more holistic picture of human risk emerges.
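To make the idea of aggregation concrete, here is a minimal Python sketch. It is an illustration only, not how any particular insider risk product works. The function names, the signal sources and every number in it are hypothetical, and it assumes the signals are independent and already scaled between 0 and 1, which real data rarely is.

```python
from statistics import mean, pstdev


def peer_zscore(value, peer_values):
    """Distance of one person's metric from their peer group's mean,
    measured in peer-group standard deviations."""
    spread = pstdev(peer_values)
    if spread == 0:
        return 0.0  # no variation in the peer group, so nothing stands out
    return (value - mean(peer_values)) / spread


def combined_risk(signals):
    """Combine weak signals with a noisy-OR rule.

    Each score is treated as an independent, probability-like value
    in [0, 1] (an assumption made purely for illustration).
    """
    remaining = 1.0
    for score in signals.values():
        remaining *= 1.0 - score
    return 1.0 - remaining


# Hypothetical, made-up scores from three separate data sources.
signals = {"cyber": 0.30, "hr": 0.20, "osint": 0.25}
risk = combined_risk(signals)  # higher than any single score on its own

# Hypothetical count of documents printed this week, versus peers in the same role.
z = peer_zscore(40, [10, 12, 8, 10])
```

With these made-up inputs, no single signal exceeds 0.3, yet the combined score lands at roughly 0.58, and the peer comparison flags behavior that a fixed, organization-wide threshold might miss.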

A successful insider risk program cuts across psycho-social and cyber-physical terrain to provide complete context that can be actioned early to identify and deter risk before the cat is out of the bag.

A data leak does not have to originate from a whistleblower to have far-reaching impacts. Technology and research have come on in leaps and bounds since Obama’s EO. This includes ground-breaking research on insider threat indicator development by MITRE Corporation and proactive detection platforms like DTEX InTERCEPT.

We cannot afford to let history repeat itself time and again. It’s time to readdress insider risk with a holistic lens that puts the human first.

Download the 2023 Insider Risk Investigations Report to learn more about the early warning indicators for insider risk, and the takeaways for establishing an effective insider risk program.
